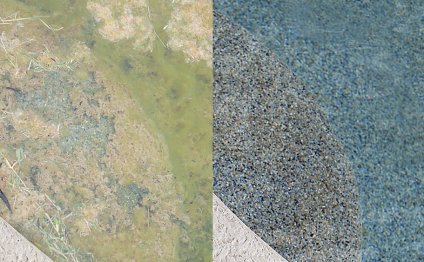

Green to Blue pool

An important technique for reducing the risk of deployments is known as Blue-Green Deployments. If we call the current live production environment “blue”, the technique consists of bringing up a parallel “green” environment with the new version of the software. Once everything is tested and ready to go live, you simply switch all user traffic to the “green” environment, leaving the “blue” environment idle. When deploying to the cloud, it is common to then discard the idle environment if there is no need for rollbacks, especially when using immutable servers.

If you are using Amazon Web Services (AWS) as your cloud provider, there are a few options for implementing blue-green deployments, depending on your system’s architecture. Since this technique relies on performing a single switch from “blue” to “green”, your choice will depend on how you are serving content in your infrastructure’s front-end.

Single EC2 instance with Elastic IP

In the simplest scenario, all your public traffic is being served from a single EC2 instance. Every instance in AWS is assigned two IP addresses at launch - a private IP that is not reachable from the internet, and a public IP that is. However, if you terminate your instance or if a failure occurs, those IP addresses are released and you will not be able to get them back.

An Elastic IP is a static IP address allocated to your AWS account that you can assign as the public IP address of any EC2 instance you own. You can also reassign it to another instance on demand by making a simple API call.

In our case, Elastic IPs are the simplest way to implement the blue-green switch - launch a new EC2 instance, configure it, deploy the new version of your system, test it, and when it is ready for production, simply reassign the Elastic IP from the old instance to the new one. The switch will be transparent to your users and traffic will be redirected almost immediately to the new instance.
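As a minimal sketch of that reassignment, assuming the Python SDK (boto3, which the article itself does not mention) and an Elastic IP allocated in a VPC, the switch comes down to a single `associate_address` call. The function name and the IDs in the usage comment are hypothetical.

```python
def switch_elastic_ip(ec2, allocation_id, green_instance_id):
    """Point an Elastic IP at the new "green" instance.

    `ec2` is assumed to be a boto3 EC2 client, e.g. boto3.client("ec2").
    """
    # AllowReassociation lets the address move even though it is still
    # associated with the old "blue" instance.
    response = ec2.associate_address(
        AllocationId=allocation_id,
        InstanceId=green_instance_id,
        AllowReassociation=True,
    )
    return response.get("AssociationId")


# Usage (hypothetical IDs):
#   import boto3
#   switch_elastic_ip(boto3.client("ec2"), "eipalloc-0abc", "i-0green")
```

Passing the client in as an argument keeps the sketch easy to try against a stub before running it against a real account.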

Multiple EC2 instances behind an ELB

If you are serving content through a load balancer, the same technique will not work because you cannot associate Elastic IPs with ELBs. In this scenario, the current blue environment is a pool of EC2 instances and the load balancer routes requests to any healthy instance in the pool. To perform the blue-green switch behind the same load balancer you need to replace the entire pool with a new set of EC2 instances containing the new version of the software. There are two ways to do this - automating a series of API calls or using AutoScaling groups.

Every AWS service has an API and a command-line client that you can use to control your infrastructure. The ELB API allows you to register and de-register EC2 instances, which adds them to or removes them from the pool. Performing the blue-green switch with API calls requires you to register the new "green" instances while de-registering the "blue" instances. You can even perform these calls in parallel to switch faster. However, the switch will not be immediate because there is a delay between registering an instance with an ELB and the ELB starting to route requests to it. This is because the ELB only routes requests to healthy instances, and it has to perform a few health checks before considering the new instances healthy.
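The register/de-register sequence might look like the sketch below, assuming boto3's Classic Load Balancer client (`boto3.client("elb")`). The function name, timeout values, and instance IDs are illustrative. Unlike the parallel variant mentioned above, this version deliberately waits for the "green" instances to pass health checks before removing the "blue" ones, trading speed for never having an empty pool.

```python
import time


def swap_elb_instances(elb, load_balancer_name, blue_ids, green_ids,
                       timeout_seconds=300, poll_seconds=10):
    """Register green instances, wait until healthy, then remove blue ones.

    `elb` is assumed to be a boto3 Classic ELB client: boto3.client("elb").
    """
    green = [{"InstanceId": instance_id} for instance_id in green_ids]
    blue = [{"InstanceId": instance_id} for instance_id in blue_ids]

    elb.register_instances_with_load_balancer(
        LoadBalancerName=load_balancer_name, Instances=green
    )

    # The ELB only routes to instances that pass its health checks,
    # so poll until every green instance reports "InService".
    deadline = time.monotonic() + timeout_seconds
    while True:
        health = elb.describe_instance_health(
            LoadBalancerName=load_balancer_name, Instances=green
        )
        states = [s["State"] for s in health["InstanceStates"]]
        if states and all(state == "InService" for state in states):
            break
        if time.monotonic() >= deadline:
            raise TimeoutError("green instances did not become healthy in time")
        time.sleep(poll_seconds)

    elb.deregister_instances_from_load_balancer(
        LoadBalancerName=load_balancer_name, Instances=blue
    )


# Usage (hypothetical names):
#   import boto3
#   swap_elb_instances(boto3.client("elb"), "web-elb", ["i-0blue"], ["i-0green"])
```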

The other option is to use the AWS service known as AutoScaling. It allows you to define automatic rules for triggering scaling events, either increasing or decreasing the number of EC2 instances in your fleet. To use it, you first need to define a launch configuration that specifies how to create new instances - which AMI to use, the instance type, security group, user data script, etc. Then you can use this launch configuration to create an auto-scaling group, defining the number of instances you want to have in the group. AutoScaling will launch the desired number of instances and continuously monitor the group. If an instance becomes unhealthy or if a threshold is crossed, it will add instances to the group to replace the unhealthy ones or scale up or down based on demand.
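To make the two pieces concrete, here is a rough sketch that defines a launch configuration and a group from it, assuming boto3's Auto Scaling client (`boto3.client("autoscaling")`). The naming scheme, instance type, group sizes, and the values in the usage comment are placeholders chosen for illustration, not recommendations.

```python
def create_group(autoscaling, version, ami_id, security_group_id,
                 availability_zones, user_data="", desired=2):
    """Create a launch configuration and an auto-scaling group built from it.

    `autoscaling` is assumed to be boto3.client("autoscaling").
    """
    lc_name = f"app-{version}-lc"      # hypothetical naming scheme
    group_name = f"app-{version}-asg"  # hypothetical naming scheme

    # The launch configuration describes HOW to create each instance.
    autoscaling.create_launch_configuration(
        LaunchConfigurationName=lc_name,
        ImageId=ami_id,
        InstanceType="t3.micro",
        SecurityGroups=[security_group_id],
        UserData=user_data,
    )

    # The group describes HOW MANY instances to keep running.
    autoscaling.create_auto_scaling_group(
        AutoScalingGroupName=group_name,
        LaunchConfigurationName=lc_name,
        MinSize=desired,
        MaxSize=desired * 2,
        DesiredCapacity=desired,
        AvailabilityZones=availability_zones,
    )
    return lc_name, group_name


# Usage (hypothetical values):
#   import boto3
#   create_group(boto3.client("autoscaling"), "v2", "ami-0abc",
#                "sg-0abc", ["us-east-1a", "us-east-1b"])
```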

AutoScaling groups can also be associated with an ELB, and AutoScaling will then take care of registering and de-registering EC2 instances with the load balancer any time an automatic scaling event occurs. However, at the time of writing, the association can only be made when the group is first created and not after it is running. We can use this feature to implement the blue-green switch, but it requires a few non-intuitive steps, detailed here:

- Create the launch configuration for the new “green” version of your software.

- Create a new “green” AutoScaling group using the launch configuration from the first step and associate it with the same ELB that is serving the “blue” instances. Wait for the new instances to become healthy and get registered.

- Update the “blue” group and set the desired number of instances to zero. Wait for the old instances to be terminated.

- Delete the “blue” AutoScaling group and launch configuration.
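The steps above can be sketched as one function, assuming boto3's Auto Scaling and Classic ELB clients. All names, parameter dictionaries, and timeouts are hypothetical, and error handling (for example, rolling back if "green" never becomes healthy) is left out.

```python
import time


def _wait_until(predicate, what, timeout_seconds, poll_seconds):
    """Poll `predicate` until it returns True or the timeout expires."""
    deadline = time.monotonic() + timeout_seconds
    while not predicate():
        if time.monotonic() >= deadline:
            raise TimeoutError(f"timed out waiting for {what}")
        time.sleep(poll_seconds)


def _describe_group(autoscaling, group_name):
    """Return the group's description, or None if it does not exist."""
    response = autoscaling.describe_auto_scaling_groups(
        AutoScalingGroupNames=[group_name]
    )
    groups = response["AutoScalingGroups"]
    return groups[0] if groups else None


def blue_green_switch(autoscaling, elb, elb_name, blue_group, blue_lc,
                      green_group, green_lc, green_lc_params,
                      green_group_params, timeout_seconds=900, poll_seconds=15):
    # Step 1: launch configuration for the new "green" version.
    autoscaling.create_launch_configuration(
        LaunchConfigurationName=green_lc, **green_lc_params
    )

    # Step 2: "green" group attached to the ELB that serves "blue".
    autoscaling.create_auto_scaling_group(
        AutoScalingGroupName=green_group,
        LaunchConfigurationName=green_lc,
        LoadBalancerNames=[elb_name],
        **green_group_params,
    )

    def green_in_service():
        group = _describe_group(autoscaling, green_group)
        if group is None:
            return False
        ids = [i["InstanceId"] for i in group["Instances"]]
        health = elb.describe_instance_health(LoadBalancerName=elb_name)
        states = {s["InstanceId"]: s["State"] for s in health["InstanceStates"]}
        return (bool(ids) and len(ids) >= group["DesiredCapacity"]
                and all(states.get(i) == "InService" for i in ids))

    _wait_until(green_in_service, "green instances", timeout_seconds, poll_seconds)

    # Step 3: scale "blue" down to zero and wait for it to drain.
    autoscaling.update_auto_scaling_group(
        AutoScalingGroupName=blue_group, MinSize=0, MaxSize=0, DesiredCapacity=0
    )

    def blue_drained():
        group = _describe_group(autoscaling, blue_group)
        return group is None or not group["Instances"]

    _wait_until(blue_drained, "blue instances to terminate",
                timeout_seconds, poll_seconds)

    # Step 4: delete the "blue" group and its launch configuration.
    autoscaling.delete_auto_scaling_group(AutoScalingGroupName=blue_group)
    autoscaling.delete_launch_configuration(LaunchConfigurationName=blue_lc)
```

Here `green_lc_params` would carry keys such as `ImageId` and `InstanceType`, and `green_group_params` keys such as `MinSize`, `MaxSize`, `DesiredCapacity`, and `AvailabilityZones`.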

This procedure maintains the same ELB while replacing the EC2 instances and the AutoScaling group behind it. The main drawback of this approach is the delay. You have to wait for the new instances to launch, for the AutoScaling group to consider them healthy, for the ELB to consider them healthy, and then for the old instances to terminate. While the switch is happening there is a period of time when the ELB is routing requests to both “green” and “blue” instances, which could have an undesirable effect on your users. For that reason, I would probably not use this approach when doing blue-green deployments with ELBs and would instead consider the next option - DNS redirection.
